This topic was created 2911 days ago; the information mentioned may have changed since then.

```python
#!/usr/bin/env python
# -*- coding:utf-8 -*-

import os
import re

import requests

# Browser-like User-Agent, sent with every request.
HEADERS = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/66.0.3359.181 Safari/537.36"}


def getHTMLText(url):
    """Return the page source of url, or an empty string if the request fails."""
    try:
        r = requests.get(url, headers=HEADERS)
        r.raise_for_status()
        return r.text
    except requests.exceptions.RequestException as e:
        print(e)
        return ""


def getURLList(html):
    """Return the image URLs found in html, de-duplicated in first-seen order."""
    regex = r"(http(s?):)([/|.|\w|\s|-])*\.(?:jpg|gif|png)"
    lst = [m.group() for m in re.finditer(regex, html, re.MULTILINE)]
    return sorted(set(lst), key=lst.index)


def download(lst, filepath='img'):
    """Download every URL in lst into the directory filepath."""
    if not os.path.isdir(filepath):
        os.makedirs(filepath)
    filecounter = len(lst)
    filenow = 1
    for url in lst:
        filename = filepath + '/' + url.split('/')[-1]
        try:
            img = requests.get(url, headers=HEADERS)
            img.raise_for_status()
        except requests.exceptions.RequestException as e:
            print(e)
            continue
        print("Downloading {}/{} file name:{}".format(filenow, filecounter, filename.split('/')[-1]))
        filenow += 1
        # Open the file only after the request succeeded, so a failed
        # download does not leave an empty file behind.
        with open(filename, 'wb') as f:
            f.write(img.content)
        print("{} saved".format(filename))


if __name__ == '__main__':
    url = input('please input the image url:')
    filepath = input('please input the download path:')
    html = getHTMLText(url)
    lst = getURLList(html)
    download(lst, filepath)
```

Requires the requests library.

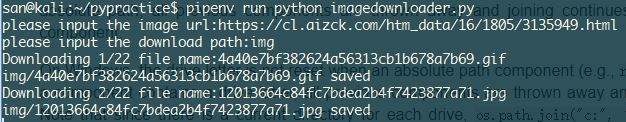

Output of a sample run

Supplement 1 May 22, 2018

I ran into a problem: when several images share the same file name, only the last one is kept. I added a check and a random prefix.

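```python
import random

# Add the following before `with open(filename, 'wb') as f`
if os.path.isfile(filename):
    # Prefix the name with a random number, keeping the file inside filepath.
    filename = os.path.join(filepath, str(random.randint(1, 10000)) + os.path.basename(filename))
```

1 soho176 May 21, 2018
urllib.urlretrieve works well for downloading images. Give it a try.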

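3 mYYnSmiTEQWcCwAr May 21, 2018 via Android
It looks like images with Chinese file names are not handled; the name needs to be URL-decoded. Also, should special characters in file names be handled? Part of a URL may not be usable as a file name.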
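The URL-decoding step suggested in this reply could look roughly like the sketch below. It relies only on `urllib.parse.urlsplit` and `urllib.parse.unquote` from the standard library. The helper name `filename_from_url` and the example URL are made up for illustration and are not part of the original script.

```python
import posixpath
from urllib.parse import unquote, urlsplit


def filename_from_url(url):
    """Return the URL-decoded last path segment of url (hypothetical helper)."""
    # urlsplit separates the path from the query string and fragment,
    # so "?w=200" or "#top" never end up in the file name.
    path = urlsplit(url).path
    # unquote turns percent-escapes such as %E5%9B%BE back into characters
    # (UTF-8 is the default encoding).
    return unquote(posixpath.basename(path))


if __name__ == "__main__":
    print(filename_from_url("https://example.com/img/%E5%9B%BE%E7%89%87.jpg?w=200"))
```

A decoded name can still contain characters the local file system rejects (for example a `/` that was escaped as `%2F`), so filtering the result is probably still worthwhile.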

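4 liyiecho May 21, 2018
For downloads, I think it is better to call aria2, which can guarantee the integrity of the downloaded content. If you download with a Python module, the content may end up incomplete when there is a network problem or an error.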
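One way to hand the URL list to aria2 from Python is sketched below. It assumes the `aria2c` binary is installed and on `PATH`, and it uses only the `--input-file`, `--dir` and `--continue` options. The helper names `build_aria2_command` and `download_with_aria2` are hypothetical, and this is not something the original script does.

```python
import os
import subprocess
import tempfile


def build_aria2_command(list_file, dest_dir):
    """Build the aria2c command line (hypothetical helper)."""
    return [
        "aria2c",
        "--input-file={}".format(list_file),  # file with one URL per line
        "--dir={}".format(dest_dir),          # directory to save into
        "--continue=true",                    # resume partial downloads
    ]


def download_with_aria2(urls, dest_dir="img"):
    """Hand urls to aria2c; assumes aria2c is installed and on PATH."""
    with tempfile.NamedTemporaryFile("w", suffix=".txt", delete=False) as f:
        f.write("\n".join(urls) + "\n")
        list_file = f.name
    try:
        # check=True raises CalledProcessError if aria2c exits non-zero.
        subprocess.run(build_aria2_command(list_file, dest_dir), check=True)
    finally:
        os.unlink(list_file)
```

With this in place, the `download(lst, filepath)` call in the script could be swapped for `download_with_aria2(lst, filepath)`.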

5 ucun OP
@soho176 #1 urlretrieve has a lot of pitfalls for downloading images: blurry images, files that won't open, and so on.

```python
#!/usr/bin/env python
# -*- coding:utf-8 -*-

import os
import re
import urllib.request
from urllib.error import HTTPError

USER_AGENT = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/66.0.3359.181 Safari/537.36"


def getHTMLText(url):
    """Return the page source of url, or an empty string on an HTTP error."""
    req = urllib.request.Request(url=url, headers={"User-Agent": USER_AGENT})
    try:
        with urllib.request.urlopen(req) as f:
            return f.read().decode('utf-8')
    except HTTPError as e:
        print('Error code:', e.code)
        return ""


def getURLList(html):
    """Return the image URLs found in html, de-duplicated in first-seen order."""
    regex = r"(http(s?):)([/|.|\w|\s|-])*\.(?:jpg|gif|png)"
    lst = [m.group() for m in re.finditer(regex, html, re.MULTILINE)]
    return sorted(set(lst), key=lst.index)


def download(lst, filepath='img'):
    """Download every URL in lst into the directory filepath."""
    if not os.path.isdir(filepath):
        os.makedirs(filepath)
    # Install the opener once so that urlretrieve sends the User-Agent header.
    opener = urllib.request.build_opener()
    opener.addheaders = [("User-Agent", USER_AGENT)]
    urllib.request.install_opener(opener)
    for url in lst:
        filename = filepath + '/' + url.split('/')[-1]
        urllib.request.urlretrieve(url, filename)


if __name__ == '__main__':
    url = input('please input the image url:')
    filepath = input('please input the download path:')
    html = getHTMLText(url)
    lst = getURLList(html)
    download(lst, filepath)
```

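6 ucun OP
@cy97cool #3 Chinese file names in page URLs are fairly rare, I think, and adding encode handling would slow things down. As for special characters in file names: web pages can parse them, so the local file system should probably cope too. I have hit very few problems so far. Maybe deal with it when it actually comes up?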

10 mYYnSmiTEQWcCwAr May 22, 2018
@ucun It is still better to filter the file name before settling on it.

```python
def safefilename(filename):
    """
    convert a string to a safe filename
    :param filename: a string, may be url or name
    :return: special chars replaced with _
    """
    for i in "\\/:*?\"<>|$":
        filename = filename.replace(i, "_")
    return filename
```
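Filtering the name does not solve the duplicate-name problem from Supplement 1, and a random prefix can still collide. A minimal sketch of a deterministic alternative follows, assuming a numeric suffix is acceptable. The helper name `unique_path` is hypothetical and not part of the thread's code.

```python
import os


def unique_path(directory, name):
    """Return a path inside directory that does not exist yet (hypothetical helper)."""
    stem, ext = os.path.splitext(name)
    candidate = os.path.join(directory, name)
    counter = 1
    # Append _1, _2, ... before the extension until the name is free.
    while os.path.exists(candidate):
        candidate = os.path.join(directory, "{}_{}{}".format(stem, counter, ext))
        counter += 1
    return candidate
```

In the download loop this would replace the manual concatenation, for example `filename = unique_path(filepath, url.split('/')[-1])`.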